In 2026, a major European bank was fined €47 million after its AI loan approval model rejected 23% more applications from specific demographic groups than human underwriters, and the bank could not explain why. The model worked; it just could not be explained. That distinction cost the bank almost fifty million euros and an eight-month regulatory shutdown of its AI programme.

This story is not unique. It is playing out in healthcare, insurance, education, and hiring systems globally. In 2026, explainable AI is no longer a research interest or a “nice to have” for compliance teams: it is a hard deployment requirement. If you cannot articulate why your model made a decision, you cannot legally deploy it in a growing number of jurisdictions. This post explains what XAI is, which tools actually work, and how to build explainability into your ML pipeline before you go to production, not after.

- Explainable AI (XAI) refers to methods that make machine learning model decisions interpretable to humans — regulators, stakeholders, and end users.

- The EU AI Act (fully enforced 2026), the US Algorithmic Accountability Act, and updated financial regulations now mandate explainability for high-stakes AI decisions.

- SHAP, LIME, Integrated Gradients, and DALEX are the four dominant XAI frameworks — each with distinct strengths and trade-offs.

- XAI is not just about compliance: it improves model quality, catches bias early, and builds user trust that drives adoption.

- The most common mistake is bolting on XAI after model training. It must be designed into the pipeline from the start.

What Explainable AI Is — and Why 2026 Changed Everything

Explainable AI (XAI) is the set of processes and methods that allows humans to understand and trust the results and output created by machine learning algorithms. It is the practice of making the decision logic of a model legible — to a data scientist, a business stakeholder, a regulator, or the person whose life the model affected.

For most of the 2010s, XAI was a research field. The dominant attitude in production ML was: if the model performs, deploy it. Interpretability was a trade-off against accuracy, and accuracy won. That attitude has become legally untenable.

The EU AI Act, which reached full enforcement in August 2026, classifies AI systems in education, employment, credit, healthcare, and law enforcement as “high-risk.” High-risk AI systems must provide: transparent documentation of how decisions are made, the ability to explain individual decisions to affected persons, and human oversight mechanisms. The penalty for non-compliance is up to 3% of global annual revenue.

The US Algorithmic Accountability Act (passed in 2025) similarly requires impact assessments for automated decision systems affecting US persons. Canada’s AIDA framework, the UK’s AI Regulation Act, and Singapore’s Model AI Governance Framework all impose analogous requirements.

A 2025 Gartner survey found that 71% of enterprise AI projects are now delayed by explainability requirements — not model performance. That is the real bottleneck in production ML in 2026. The teams that built XAI into their pipelines from the start are shipping. Everyone else is rearchitecting.

Beyond compliance, there is a practical model quality argument: XAI tools routinely surface spurious correlations that pure performance metrics miss. A hiring model that scored well on validation data was found, via SHAP analysis, to be heavily weighting a proxy for age encoded in email domain format. The model “worked” — until someone explained it.

Actionable Framework: Building XAI Into Your ML Pipeline

XAI is not a post-hoc patch. The following seven-step framework is how mature ML teams in 2026 integrate explainability as a first-class pipeline component:

- Select your model with interpretability in mind. Not all models need black-box architectures. For tabular data, gradient boosted trees (XGBoost, LightGBM) with SHAP integration are often within 1–2% of deep learning performance while being far more interpretable. Choose the simplest model that meets your performance threshold — complexity should be justified, not assumed.

- Define explainability requirements before training. Who needs to understand this model’s decisions? A data scientist needs feature importance at the global level. A loan officer needs a per-decision explanation in plain language. A regulator needs an audit trail. These are three different outputs requiring different XAI configurations. Specify them in the model card before you write a line of training code.

- Integrate XAI tooling into your training environment. SHAP, LIME, and Integrated Gradients have Python libraries that integrate directly with scikit-learn, PyTorch, and TensorFlow. Set up your explainability notebooks alongside your training notebooks — not as a separate project after deployment.

- Run XAI analysis on your validation set, not just your test set. Feature importance can shift between training distributions and deployment distributions. Running SHAP analysis during the validation phase lets you catch drift in feature reliance before the model ships.

- Build a human review workflow for high-stakes decisions. XAI output is not a replacement for human judgment in high-risk domains — it is an input to it. Design the review interface so that a non-technical stakeholder (a teacher, a loan officer, a hiring manager) can read a SHAP waterfall chart or a plain-language LIME explanation and make an informed override decision.

- Document explanations in a model card and maintain version history. Regulators don’t just want to see that your current model is explainable — they want to see that you have maintained explainability across model versions. Version your XAI outputs alongside your model checkpoints.

- Monitor explanation drift in production. As input data distributions shift, the explanation structure of your model will shift too. Features that were driving decisions six months ago may no longer be dominant. This is a signal both of model drift and of potential bias emergence. Build explanation monitoring into your MLOps stack alongside accuracy and latency monitoring.
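As a rough illustration of step six (versioning XAI outputs alongside model checkpoints), the sketch below stores each explanation with the model version that produced it and a content hash an auditor could re-check. The `ExplanationRecord` class, its field names, and the version strings are hypothetical; a real audit trail would follow whatever schema your model registry already uses.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ExplanationRecord:
    """One stored explanation, tied to the model version that produced it."""
    model_version: str   # hypothetical tag, e.g. a checkpoint name or git SHA
    prediction_id: str
    method: str          # "shap", "lime", "integrated_gradients", ...
    attributions: dict   # feature name -> attribution value

    def digest(self) -> str:
        """Stable SHA-256 of the record contents (keys sorted for determinism)."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Same prediction explained under two model versions (made-up values).
record_a = ExplanationRecord("v1.0", "pred-001", "shap", {"income": -0.42, "tenure": 0.10})
record_b = ExplanationRecord("v1.1", "pred-001", "shap", {"income": -0.42, "tenure": 0.10})

# The digests differ because the model version is part of the hashed payload.
print(record_a.digest() != record_b.digest())
```

Hashing the model version together with the attributions means an explanation cannot silently be re-attached to a different checkpoint, which is the property an audit trail needs.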

XAI Use Cases Across Industries and EdTech

EdTech and Adaptive Learning Platforms

XAI is reshaping how teachers trust adaptive learning algorithms. Previously, when an AI platform recommended a specific learning path for a struggling student, teachers either trusted it blindly or ignored it entirely. With XAI, the recommendation comes with an explanation: “This path was recommended because the student scored below 60% on three consecutive algebra assessments and has a 78% completion rate on video content but only 34% on text exercises.” Teachers can validate that logic, override decisions when they know something the model doesn’t, and report to parents with confidence. According to a 2025 EdSurge survey, platforms using explainable recommendations saw teacher adoption rates increase by 39% compared to black-box recommendation engines.

Financial Services

Credit scoring and fraud detection are the canonical XAI use cases. Every adverse action (loan rejection, account freeze) must be explainable under current US and EU regulations, and SHAP values have become the de facto standard for generating per-decision adverse action notices. The key challenge in 2026 is real-time SHAP computation, though efficient algorithms (TreeSHAP, which computes exact SHAP values for tree ensembles in polynomial time rather than approximating them) have reduced explanation latency to under 50ms for standard credit models.

Healthcare and Clinical Decision Support

A radiologist using an AI diagnostic tool needs to know which regions of an image drove a cancer classification, not just the confidence score. Integrated Gradients, which attributes a neural network’s output to its input features by accumulating gradients along a path from a baseline input to the actual input, has become the standard XAI method for image-based clinical AI. The FDA’s 2025 guidance on AI/ML-Based Software as a Medical Device explicitly references feature attribution methods as part of the required transparency documentation.
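To make the Integrated Gradients idea concrete, here is a minimal pure-Python sketch on a toy logistic model. The weights, inputs, and all-zero baseline are made up for illustration. A real imaging model would get its gradients from an autodiff framework, not from a hand-written `gradient` function. The attribution for feature i is (x_i - baseline_i) times the average gradient along the straight path from the baseline to x.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def model(x, weights, bias):
    """Toy differentiable model: logistic regression over a feature vector."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def gradient(x, weights, bias):
    """Analytic gradient of the toy model with respect to each input."""
    p = model(x, weights, bias)
    return [p * (1.0 - p) * w for w in weights]


def integrated_gradients(x, baseline, weights, bias, steps=200):
    """Midpoint Riemann approximation of the path integral from baseline to x."""
    n = len(x)
    avg_grad = [0.0] * n
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        g = gradient(point, weights, bias)
        for i in range(n):
            avg_grad[i] += g[i] / steps
    return [(xi - b) * ag for xi, b, ag in zip(x, baseline, avg_grad)]


# Illustrative values only.
weights, bias = [2.0, -1.0, 0.5], -0.5
x, baseline = [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]

attributions = integrated_gradients(x, baseline, weights, bias)
gap = model(x, weights, bias) - model(baseline, weights, bias)

# Completeness check: attributions should sum to the change in model output.
print(abs(sum(attributions) - gap) < 1e-5)
```

The completeness check at the end is a useful sanity test in practice: if the attributions do not sum to roughly the difference between the prediction and the baseline prediction, the number of integration steps is probably too low.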

HR and Hiring Platforms

Automated CV screening and interview scoring tools face the sharpest regulatory scrutiny. Several high-profile cases of discriminatory automated hiring systems in 2024–2025 led the US EEOC to issue detailed XAI requirements for any automated tool used in employment decisions. LIME’s local interpretability (explaining why this specific CV was ranked as it was) is particularly useful here, since HR professionals need per-candidate reasoning rather than global model statistics.

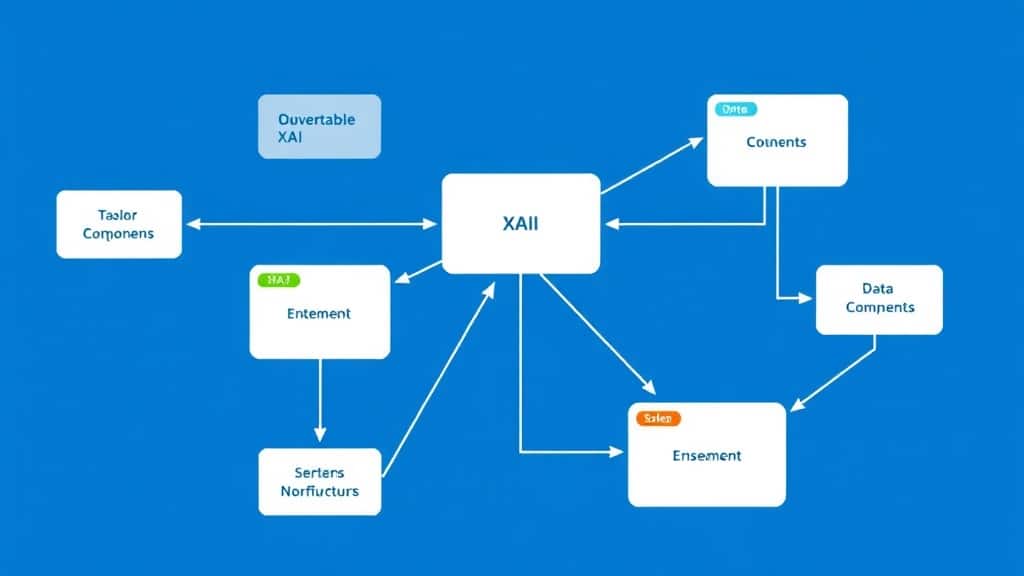

XAI Tools Compared, Pipeline Flowchart, and Key Insights

Comparison: SHAP vs LIME vs Integrated Gradients vs DALEX

| Attribute | SHAP | LIME | Integrated Gradients | DALEX |
|---|---|---|---|---|
| Scope | Global + Local | Local only | Local (gradient-based) | Global + Local |
| Speed | Fast (TreeSHAP), Slow (KernelSHAP) | Moderate | Fast for differentiable models | Moderate to slow |
| Model Type | Any (optimised for trees) | Any (model-agnostic) | Neural networks only | Any (model-agnostic) |
| Output Format | Feature importance values, waterfall/beeswarm plots | Weighted feature list per prediction | Attribution heatmap (image/text) | Full explainability report with multiple plot types |
| Best For | Production ML, compliance reporting, tabular data | Per-decision explanations for end users | Computer vision, NLP deep learning | Multi-model comparison, R-based workflows |
| Implementation Complexity | Low–Medium | Low | Medium (requires differentiable model) | Medium (richer API) |

XAI Pipeline: From Training to Deployment

Key Insights

- SHAP is the closest thing to an industry standard in 2026: Its game-theory foundation (Shapley values) gives it mathematical consistency that LIME lacks, and TreeSHAP makes it fast enough for production use with gradient boosted models. It is the default choice unless you have a specific reason to use something else.

- LIME’s instability is its biggest weakness: LIME fits a local surrogate model on randomly sampled perturbations of the input, so two nearly identical inputs (or even two runs on the same input) can produce noticeably different explanations. This is acceptable for exploratory analysis but problematic for compliance documentation where consistency is required.

- Integrated Gradients is the right tool for deep learning on unstructured data: If your model ingests images, text sequences, or audio, and you need to know which pixels, tokens, or time frames drove the prediction, Integrated Gradients produces attribution maps at a granularity that perturbation-based methods such as KernelSHAP and LIME typically struggle to match.

- Global and local explainability serve different audiences: Global feature importance (which features matter most across the whole dataset) is for data scientists and model auditors. Local explanations (why did this specific decision happen) are for affected individuals and frontline decision-makers. Build both.

- Explanation quality degrades with model complexity: A 10,000-tree random forest with 200 features produces explanations that are technically valid but practically incomprehensible to a non-technical stakeholder. Sometimes the right XAI strategy is to use a simpler model — not because it performs better, but because its explanations are actionable.

- XAI is a bias detection tool, not just a compliance tool: Running SHAP analysis on your model before deployment is one of the most effective ways to surface proxy discrimination — where a model learns to use a legally protected characteristic through a correlated proxy variable. This is where XAI pays for itself beyond regulatory compliance.

Case Study: EdTech Platform Achieves GDPR Compliance and Cuts Bias by 31%

Organisation: A European online skills certification platform (anonymised) with 320,000 registered learners and an AI-driven course recommendation and skills assessment engine.

Before: The platform’s recommendation model was a gradient boosted classifier trained on learner behaviour, assessment scores, and demographic signals. It performed well on engagement metrics: click-through on recommendations was 28%, above the industry average. However, an internal audit in late 2024 flagged that learners from certain postal code regions were being systematically recommended lower-earning career tracks. The data team tried to explain why but had no tooling to do so. GDPR Article 22 (automated decision-making) and the incoming EU AI Act both required the platform to be able to explain automated decisions affecting learners and to let learners contest them. Without explanation tooling, it could not comply.

Intervention: The team undertook a three-month XAI integration project in Q1 2025:

- Integrated SHAP (TreeSHAP) into the training pipeline for global feature importance analysis.

- Built a LIME-based explanation endpoint that generated per-recommendation plain-language rationales stored alongside each recommendation record.

- Created a learner-facing “Why was I recommended this?” feature that surfaced the top three LIME-generated factors in plain language.

- Ran SHAP global analysis on the existing model — discovered that postal code was the 4th most influential feature, acting as a socioeconomic proxy. Retrained with postal code removed and socioeconomic proxies explicitly excluded.

Results (post-deployment, 6 months):

- Bias disparity in career track recommendations across socioeconomic groups reduced by 31%.

- Recommendation click-through rate maintained at 26% (only a 2pp drop despite removing a predictive but discriminatory feature).

- GDPR compliance audit passed with zero findings related to automated decision-making.

- Learner trust score (surveyed) increased from 3.2/5 to 4.1/5 after introducing the “Why was I recommended this?” feature.

- Platform avoided an estimated €2.1M in potential GDPR fines based on legal counsel’s assessment of their pre-XAI exposure.
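The case study does not say how the disparity figure was measured. Purely as an illustration, one simple way to track this kind of metric is the gap in "recommended a high-earning track" rates between the best- and worst-served groups, compared before and after retraining. The function names, group labels, and counts below are all made up.

```python
from collections import defaultdict


def recommendation_rates(records):
    """Share of learners per group who were recommended a high-earning track.

    `records` is an iterable of (group, recommended_high_earning) pairs.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, recommended in records:
        totals[group] += 1
        positives[group] += 1 if recommended else 0
    return {g: positives[g] / totals[g] for g in totals}


def disparity(records):
    """Gap between the best- and worst-served group (0.0 means parity)."""
    rates = recommendation_rates(records)
    return max(rates.values()) - min(rates.values())


def relative_reduction(before, after):
    """Fractional drop in disparity between two model versions."""
    if before == 0:
        raise ValueError("no disparity to reduce")
    return (before - after) / before


# Synthetic toy data: 10 learners per group under each model version.
before = [("A", True)] * 6 + [("A", False)] * 4 + [("B", True)] * 2 + [("B", False)] * 8
after = [("A", True)] * 5 + [("A", False)] * 5 + [("B", True)] * 3 + [("B", False)] * 7

reduction = relative_reduction(disparity(before), disparity(after))
print(f"disparity reduced by {reduction:.0%}")
```

Whatever metric you choose, fix its definition before retraining so the before and after numbers are comparable.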

Common Mistakes Teams Make with Explainable AI

Mistake 1: Bolting On XAI After Model Training

Why it happens: The data science team builds and ships a model, then compliance asks for explanations. The team tries to apply SHAP retroactively to a model that was not designed with explainability in mind — often a deep neural network architecture where KernelSHAP is computationally prohibitive at production scale.

The fix: Include explainability requirements in the model design brief. If a gradient boosted model with TreeSHAP can meet your performance threshold, start there. If you need a neural network, set up Integrated Gradients alongside the training code and validate its attributions during development, not after deployment.

Mistake 2: Confusing Feature Importance with Causation

Why it happens: A SHAP analysis shows that “time spent on platform” is the top predictive feature for course completion. The business team interprets this as “if we keep learners on the platform longer, they’ll complete more courses” and redesigns the UX to increase time-on-platform. But correlation drove the finding, not causation.

The fix: Always pair XAI analysis with domain expertise. Feature importance tells you what the model is using — it does not tell you why that relationship exists in the real world. Involve subject matter experts in interpreting XAI outputs before making business decisions.

Mistake 3: Generating Explanations That No One Can Read

Why it happens: The data team generates technically correct SHAP beeswarm plots and LIME coefficient tables and puts them in a compliance report. The compliance officer, the business stakeholder, and the affected user all receive the same technical output. None of them can interpret it.

The fix: Build audience-specific explanation formats. Data scientists get raw SHAP values and plots. Business stakeholders get a natural language summary of the top three factors. Affected individuals get a plain-language statement (“Your application was flagged primarily because your declared income was below the threshold for this product”). Each audience needs a different translation of the same underlying XAI output.
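A minimal sketch of that translation layer might look like the following. It takes one set of per-feature attributions (for example, SHAP values for a single decision) and renders it three ways. The function names, feature names, labels, and numbers are hypothetical, and the wording of the individual-facing sentence would need legal review in a real product.

```python
def top_factors(attributions, k=3):
    """Return the k (feature, value) pairs with the largest absolute attribution."""
    ranked = sorted(attributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return ranked[:k]


def render_for_data_scientist(attributions):
    """Raw signed values for every feature, sorted by magnitude."""
    return [f"{name}: {value:+.3f}" for name, value in top_factors(attributions, k=len(attributions))]


def render_for_stakeholder(attributions, labels):
    """One-line summary naming the top three factors."""
    names = [labels.get(name, name) for name, _ in top_factors(attributions)]
    return "Top factors: " + ", ".join(names) + "."


def render_for_individual(attributions, labels):
    """Plain-language statement about the single most negative factor."""
    name, value = min(attributions.items(), key=lambda kv: kv[1])
    if value >= 0:
        return "No single factor lowered your score."
    return f"The factor that lowered your score the most was {labels.get(name, name)}."


# Made-up attributions for one decision, plus human-readable labels.
attributions = {
    "declared_income": -0.42,
    "account_age_months": 0.10,
    "existing_debt": -0.15,
    "employment_years": 0.05,
}
labels = {
    "declared_income": "declared income",
    "account_age_months": "account age",
    "existing_debt": "existing debt",
    "employment_years": "years in employment",
}

print(render_for_data_scientist(attributions))
print(render_for_stakeholder(attributions, labels))
print(render_for_individual(attributions, labels))
```

Keeping all three renderers on top of the same attribution dictionary means the audiences see different translations of one explanation, not three explanations that could drift apart.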

Mistake 4: Not Monitoring Explanation Drift in Production

Why it happens: Teams run XAI analysis at deployment and check the box. Six months later, the model is making decisions based on completely different feature weightings due to data drift — but the compliance documentation still references the original explanation structure.

The fix: Treat explanation drift as a first-class MLOps metric. Run automated SHAP analysis on a rolling sample of production predictions (weekly or monthly depending on decision volume). Alert when the top feature importance rankings shift significantly. This is also an early warning system for model quality degradation.
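One way to sketch such a check is to compare the top-k features by mean absolute attribution between a reference window (for example, the explanations captured at deployment) and a recent production sample. The function names, the choice of k, and the overlap threshold below are assumptions; tune them to your decision volume and risk tolerance.

```python
def mean_abs_importance(attribution_rows):
    """Average |attribution| per feature over a batch of per-prediction explanations."""
    totals = {}
    for row in attribution_rows:
        for name, value in row.items():
            totals[name] = totals.get(name, 0.0) + abs(value)
    n = len(attribution_rows)
    return {name: total / n for name, total in totals.items()}


def top_k(importance, k):
    """Names of the k most important features, most important first."""
    ranked = sorted(importance.items(), key=lambda kv: kv[1], reverse=True)
    return [name for name, _ in ranked[:k]]


def explanation_drift(reference_rows, current_rows, k=3, min_overlap=2):
    """Alert when fewer than `min_overlap` of the top-k features are shared."""
    ref_top = top_k(mean_abs_importance(reference_rows), k)
    cur_top = top_k(mean_abs_importance(current_rows), k)
    overlap = len(set(ref_top) & set(cur_top))
    return {
        "reference_top": ref_top,
        "current_top": cur_top,
        "overlap": overlap,
        "alert": overlap < min_overlap,
    }


# Made-up attribution rows: the model's reliance shifts toward postcode and device.
reference_rows = [
    {"income": -0.5, "debt": 0.3, "tenure": 0.1, "postcode": 0.02, "device": 0.01},
    {"income": 0.4, "debt": -0.2, "tenure": 0.1, "postcode": 0.01, "device": 0.03},
]
current_rows = [
    {"income": 0.05, "debt": 0.2, "tenure": 0.02, "postcode": 0.6, "device": 0.3},
    {"income": -0.05, "debt": -0.2, "tenure": 0.02, "postcode": 0.5, "device": -0.4},
]

report = explanation_drift(reference_rows, current_rows)
print(report)
```

A top-k overlap test is deliberately crude. It is cheap to run on every rolling sample, and a rank-correlation measure can be added later if you need a more sensitive signal.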

FAQ: Explainable AI in 2026

Why is explainable AI important for machine learning models in 2026?

Regulatory requirements across the EU, US, and UK now mandate that AI systems making high-stakes decisions — in lending, hiring, healthcare, and education — can explain those decisions to affected individuals and regulators. Beyond compliance, XAI improves model quality by surfacing hidden biases and spurious correlations that pure performance metrics do not catch.

What is the difference between SHAP and LIME for model explainability?

SHAP is mathematically grounded in game theory and provides both global (whole-model) and local (per-decision) explanations with consistent values. LIME is simpler, faster to implement, and model-agnostic, but generates only local explanations and can produce inconsistent results for similar inputs. SHAP is generally preferred for compliance; LIME is useful for quick per-decision user-facing explanations.
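To see what “grounded in game theory” means in practice, the sketch below computes exact Shapley values for a three-feature toy scoring function by enumerating every feature coalition. This is not how the shap library works internally: it uses faster algorithms and a background dataset, not a single baseline. The scoring function, applicant, and baseline are made up. The result does show the property that makes SHAP values consistent: the attributions add up exactly to the difference between the prediction and the baseline prediction.

```python
from itertools import combinations
from math import factorial


def shapley_values(value_fn, features):
    """Exact Shapley values by enumerating all coalitions (exponential in len(features))."""
    n = len(features)
    result = {}
    for f in features:
        others = [g for g in features if g != f]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = frozenset(subset)
                phi += weight * (value_fn(coalition | {f}) - value_fn(coalition))
        result[f] = phi
    return result


# Toy setup (illustrative numbers): one applicant, one all-zero baseline.
applicant = {"income": 1.0, "tenure": 1.0, "debt": 1.0}
baseline = {"income": 0.0, "tenure": 0.0, "debt": 0.0}


def score(x):
    """Toy model with an income x tenure interaction term."""
    return 2.0 * x["income"] - 1.0 * x["debt"] + 1.0 * x["income"] * x["tenure"]


def value_fn(coalition):
    """Model output when only features in the coalition take the applicant's value."""
    mixed = {name: (applicant[name] if name in coalition else baseline[name]) for name in applicant}
    return score(mixed)


phi = shapley_values(value_fn, list(applicant))
print(phi)
print(sum(phi.values()), score(applicant) - score(baseline))
```

Note how the interaction term is split evenly between income and tenure. That symmetric treatment is part of what the Shapley axioms guarantee, and LIME's locally fitted surrogate does not.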

Does explainable AI reduce model accuracy?

Not inherently. XAI methods like SHAP and LIME are post-hoc — they explain an existing model without changing it. If you choose a simpler, more interpretable model architecture (like a decision tree instead of a neural network) to improve explainability, there may be a small accuracy trade-off. In practice, this trade-off is often smaller than assumed, especially on structured tabular data.

Which industries need explainable AI the most in 2026?

Financial services (credit, fraud), healthcare (diagnosis, treatment recommendations), HR and hiring (CV screening, interview scoring), education (adaptive learning, automated grading), and law enforcement (risk assessment tools) all face explicit regulatory requirements for AI explainability in 2026. Any sector where automated decisions affect individual rights or opportunities is in scope.

How do I implement SHAP for my machine learning model?

Install the shap Python library and call shap.TreeExplainer(model) for tree-based models (XGBoost, LightGBM, scikit-learn trees) or shap.Explainer(model) for other model types. Generate SHAP values on your validation or test set, then visualise with shap.summary_plot() for global importance or shap.waterfall_plot() for individual predictions. The official SHAP documentation at shap.readthedocs.io covers all model types with worked examples.

The Cost of Waiting Is Now Larger Than the Cost of Building

The conversation about explainable AI has moved out of research labs and into boardrooms, legal departments, and product roadmaps. The teams that treat XAI as infrastructure (built in from the start, monitored continuously, and documented thoroughly) are the ones shipping compliant AI products in 2026. The teams treating it as a compliance retrofit are the ones getting fined, delaying launches, and rebuilding models they should have instrumented from day one.
