AI Agents vs Automation: Why Most Teams Are Building the Wrong Thing

Here’s a question worth sitting with: is your AI agent actually an agent?

In 2026, almost every team that says “we’re building AI agents” is actually building automation with an LLM sprinkled on top. That’s not necessarily bad — but if you think you’re building something that reasons and adapts, and you’re not, you’re going to hit a wall. Usually at the worst possible moment.

This distinction matters because the architecture is completely different, the failure modes are completely different, and the skills you need are completely different. Let’s get clear on it.

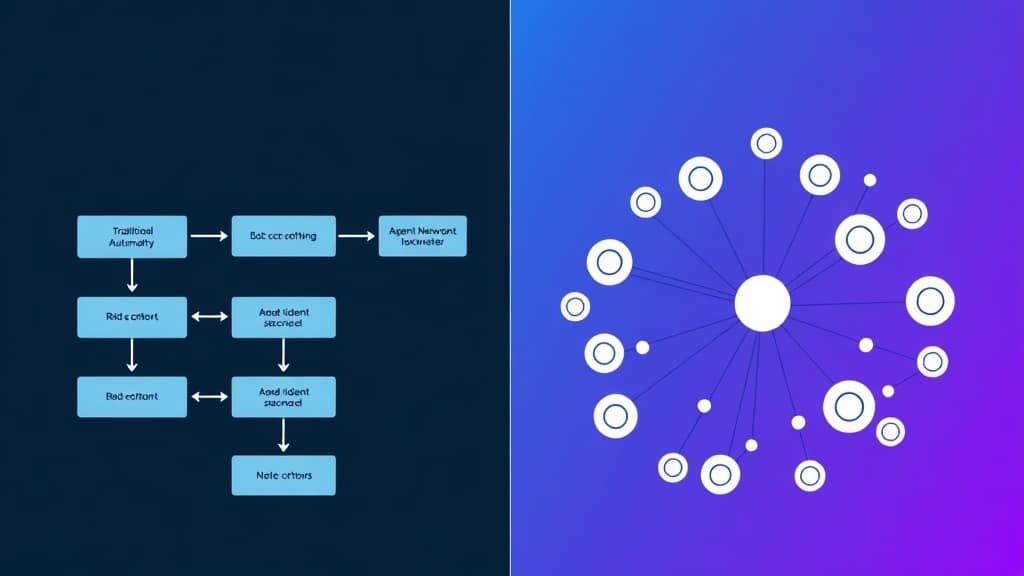

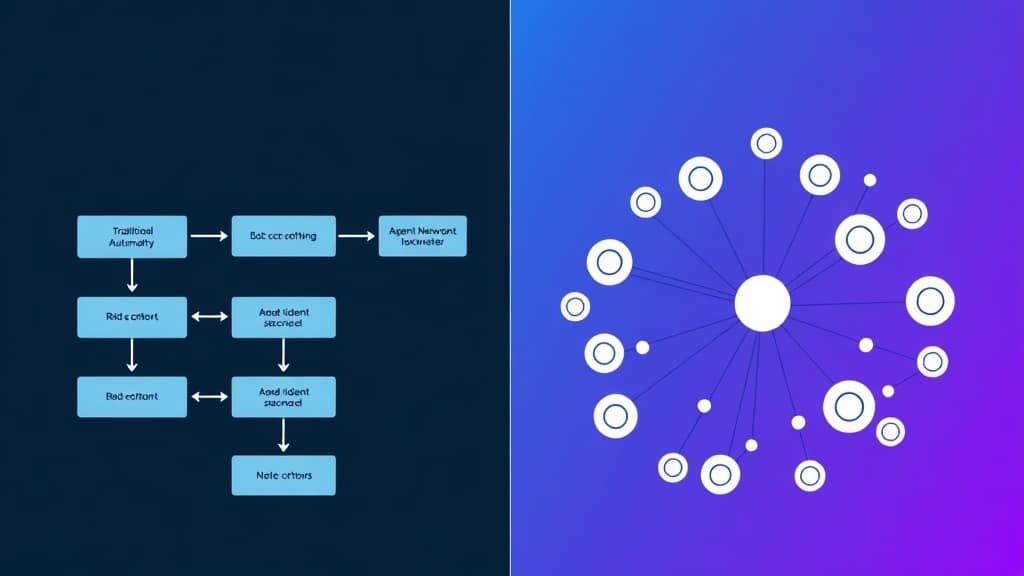

- Automation executes fixed rules. Agents reason through problems and decide what to do next.

- An agent perceives → thinks → acts → observes feedback → repeats. A Zapier flow just runs step 1, then 2, then 3.

- Most “AI agents” in production are actually automated pipelines with an LLM node, and that’s fine for simple tasks.

- Real agents are more expensive and slower. Only build one when the task genuinely requires judgment.

- The hybrid approach — agent brain + automation execution — is what mature teams run in 2026.

The Real Difference (Not the Marketing Version)

Traditional automation is deterministic. You define every step, every branch, every condition. When you map out a Zapier flow or n8n workflow, you’re essentially writing a flowchart in advance. It’s powerful, predictable, and cheap to run. The problem is: real-world tasks are messy. They don’t always follow your flowchart.
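In code, “writing a flowchart in advance” looks something like the minimal Python sketch below. The event types and action strings are hypothetical, not any real Zapier or n8n API; the point is that every branch exists before the first event arrives, and anything the author did not anticipate falls through to error handling.

```python
def handle_event(event: dict) -> str:
    """A hypothetical rule-based workflow: every branch is written upfront."""
    kind = event.get("type")
    if kind == "enrollment":
        return f"send_welcome_email:{event['email']}"
    if kind == "payment_failed":
        return f"send_payment_reminder:{event['email']}"
    if kind == "course_completed":
        return f"issue_certificate:{event['email']}"
    # Anything the flowchart did not anticipate goes to error handling.
    return "route_to_error_queue"


print(handle_event({"type": "enrollment", "email": "a@example.com"}))
# → send_welcome_email:a@example.com
print(handle_event({"type": "refund_request", "email": "a@example.com"}))
# → route_to_error_queue
```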

An AI agent operates differently. Instead of following a pre-written script, it uses a language model to reason about what to do next. It can look at a student’s quiz results, decide to explain the concept differently, search for a relevant example, generate a practice problem, and evaluate the answer — all without you specifying each step in advance.

The technical term for this loop is ReAct (Reasoning + Acting), introduced in a 2022 paper by Yao et al. from Princeton and Google’s Brain team. The agent alternates between thinking about the problem and taking actions, using the results of each action to inform its next thought. It’s much closer to how a human tutor works than how a rulebook works.
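A stripped-down version of that loop, as a Python sketch. The `scripted_llm` function is a hypothetical stand-in for a real model call (it picks the next step from a fixed script), and the two tools return canned strings. What the sketch does show faithfully is the ReAct shape: a thought, an action, an observation appended to the history, and a step limit so the loop cannot run forever.

```python
from typing import Callable

# Hypothetical tools; real ones would query a database, a search index, etc.
TOOLS: dict[str, Callable[[str], str]] = {
    "get_quiz_history": lambda student_id: "missed 3 of 4 questions on standard deviation",
    "find_example": lambda topic: f"worked example for {topic}",
}


def scripted_llm(history: list[str]) -> tuple[str, str, str]:
    """Stand-in for a language model call: returns (thought, action, argument).

    A real agent would send `history` to an LLM and parse its reply. This stub
    just chooses the next step from how many observations it has seen so far.
    """
    seen = sum(1 for line in history if line.startswith("Observation:"))
    if seen == 0:
        return ("Check what the student got wrong.", "get_quiz_history", "student-42")
    if seen == 1:
        return ("They struggle with standard deviation; find an example.",
                "find_example", "standard deviation")
    return ("I have enough to respond.", "finish",
            "Explain standard deviation using the worked example.")


def react_loop(task: str, max_steps: int = 5) -> tuple[str, list[str]]:
    history = [f"Task: {task}"]
    for _ in range(max_steps):
        thought, action, arg = scripted_llm(history)  # reason
        history.append(f"Thought: {thought}")
        if action == "finish":
            return arg, history
        observation = TOOLS[action](arg)  # act
        history.append(f"Action: {action}({arg})")
        history.append(f"Observation: {observation}")  # observe, then loop
    return "Stopped: step limit reached", history


answer, trace = react_loop("Help a student stuck on a statistics concept")
print("\n".join(trace))
print("Answer:", answer)
```

In a real agent, `scripted_llm` would send the accumulated history to a language model and parse a thought and an action out of its reply; the step limit and the explicit `finish` action are worth keeping either way.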

| Dimension | Traditional Automation | AI Agent |
|---|---|---|
| Decision logic | If/then rules you define upfront | LLM reasons through options at runtime |
| Handles unexpected input | Breaks or routes to error handling | Adapts — finds a path forward |
| Memory across steps | Stateless (unless you build it in) | Maintains context naturally |
| Transparency | Every step is auditable | Can be hard to explain why it chose X |
| Cost per run | Fractions of a paisa | ₹0.05–₹2 depending on LLM used |
| Speed | Milliseconds | Seconds to tens of seconds |
| Best for | High-volume, predictable tasks | Complex, open-ended tasks needing judgment |

A Framework for Choosing (5 Questions)

Before you decide which to build, answer these:

- Is the task fully specifiable? Can you write down every possible scenario and what to do in each? If yes, automate. If no, consider an agent.

- Does the task require judgment or context synthesis? Grading an essay requires judgment. Sending a confirmation email doesn’t.

- How often does the input vary? If 95% of inputs look the same, automate and handle the 5% as exceptions. If every input is different, an agent earns its cost.

- What’s the cost of failure? Agents make mistakes that automation doesn’t — misinterpreting intent, hallucinating a step. If failures are costly (financial, safety), keep humans in the loop or use deterministic automation.

- How much latency can you tolerate? Automation runs in milliseconds. Agents take seconds. For real-time user interactions, this matters a lot.
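The five questions can be folded into a rough decision helper. This is a hypothetical Python sketch, not a validated rubric: the `TaskProfile` fields map one-to-one to the questions, the 95% threshold comes from question 3, and the order in which the checks are applied is an assumption of this sketch.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    fully_specifiable: bool         # Q1: can every scenario be written down?
    needs_judgment: bool            # Q2: judgment or context synthesis required?
    share_of_typical_inputs: float  # Q3: fraction of inputs that look alike (0 to 1)
    high_cost_of_failure: bool      # Q4: financial or safety impact on failure?
    needs_realtime: bool            # Q5: must it respond in real time?


def recommend(task: TaskProfile) -> str:
    # Q1: fully specifiable tasks are automation by definition.
    if task.fully_specifiable:
        return "automate"
    # Q3: mostly uniform input; automate and treat the rest as exceptions.
    if task.share_of_typical_inputs >= 0.95:
        return "automate, handle exceptions separately"
    # Q2: without a need for judgment, an agent's extra cost buys nothing.
    if not task.needs_judgment:
        return "automate"
    # Q5: an agent takes seconds, which may not fit a real-time budget.
    if task.needs_realtime:
        return "agent only if seconds of latency are acceptable"
    # Q4: costly failures call for a human checkpoint.
    if task.high_cost_of_failure:
        return "agent with human-in-the-loop review"
    return "agent"


essay_grading = TaskProfile(fully_specifiable=False, needs_judgment=True,
                            share_of_typical_inputs=0.2,
                            high_cost_of_failure=False, needs_realtime=False)
confirmation_email = TaskProfile(fully_specifiable=True, needs_judgment=False,
                                 share_of_typical_inputs=1.0,
                                 high_cost_of_failure=False, needs_realtime=True)
print(recommend(essay_grading))       # → agent
print(recommend(confirmation_email))  # → automate
```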

The sweet spot for most EdTech platforms: use n8n or Zapier for operational workflows (enrollment, payment, notifications) and LangGraph agents for student-facing interactions that need actual intelligence.

How This Actually Works in EdTech

Where automation wins: When a student enrolls, an automation workflow handles the downstream work — sending the welcome email, creating their account in the LMS, adding them to the right cohort Slack channel, updating the CRM, generating the invoice. None of this requires AI judgment. It happens the same way every time. Running this as an “AI agent” would cost 50x more and be slower with no quality benefit.
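The enrollment workflow reads naturally as a fixed list of steps. A minimal Python sketch with every step stubbed out; the step names and student fields are hypothetical, and a real version would call the email service, LMS, Slack, CRM, and billing APIs:

```python
from typing import Callable

Student = dict[str, str]

# Hypothetical stubs standing in for email, LMS, Slack, CRM and billing calls.
ENROLLMENT_STEPS: list[tuple[str, Callable[[Student], str]]] = [
    ("welcome_email", lambda s: f"emailed {s['email']}"),
    ("lms_account", lambda s: f"created LMS user {s['email']}"),
    ("cohort_slack", lambda s: f"added to #{s['cohort']}"),
    ("crm_update", lambda s: f"marked {s['email']} as enrolled"),
    ("invoice", lambda s: f"invoiced for {s['course']}"),
]


def on_enrollment(student: Student) -> list[str]:
    """Run every step in the same fixed order: no model call, no branching."""
    return [f"{name}: {step(student)}" for name, step in ENROLLMENT_STEPS]


for line in on_enrollment({"email": "priya@example.com",
                           "cohort": "data-jan-2026",
                           "course": "SQL Foundations"}):
    print(line)
```

Nothing in the loop needs a model: the same five steps run in the same order for every student, which is what makes this cheap to run and easy to audit.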

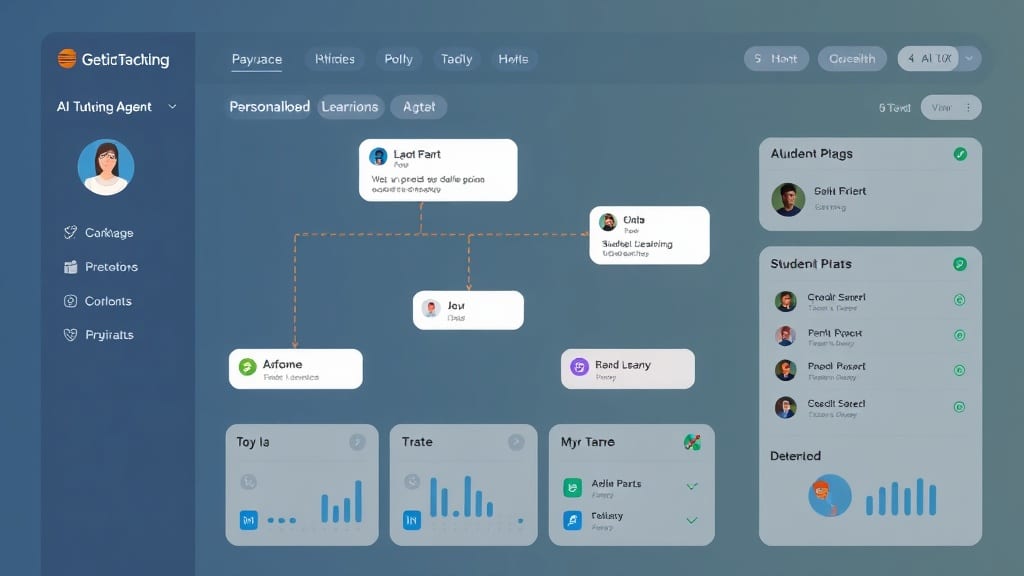

Where agents win: When that same student gets stuck on a statistics concept at 11pm and opens the chat interface. The agent reads their recent quiz history, figures out where the confusion is, decides whether to re-explain or give an example, generates a custom practice problem, and evaluates the student’s answer. No static flowchart could handle this — there are too many possible states.

The hybrid that most good teams run: The agent decides what to do. Automation executes it. The tutoring agent might decide “send this student a follow-up reminder tomorrow morning” — that decision gets handed off to a Zapier action that actually sends the email. The agent doesn’t need to handle email delivery; automation is better at that.
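One way to draw that boundary is to have the agent emit a structured decision and let a deterministic layer execute it. A minimal Python sketch, assuming a hypothetical JSON decision format: the hard-coded string stands in for the LLM's reply, and the handlers stand in for whatever actually sends the email (for example, a Zapier or n8n workflow triggered by a webhook).

```python
import json

# Stand-in for the agent's reply: a structured decision, not free text.
agent_output = '{"action": "schedule_reminder", "student_id": "s-42", "when": "tomorrow 09:00"}'

# Deterministic automation layer: a fixed map from allowed actions to handlers.
HANDLERS = {
    "schedule_reminder": lambda d: f"reminder queued for {d['student_id']} at {d['when']}",
    "notify_mentor": lambda d: f"mentor notified about {d['student_id']}",
}


def execute(decision_json: str) -> str:
    decision = json.loads(decision_json)
    handler = HANDLERS.get(decision.get("action"))
    if handler is None:
        # The automation layer refuses anything it does not know how to do.
        return f"rejected unknown action: {decision.get('action')}"
    return handler(decision)


print(execute(agent_output))  # → reminder queued for s-42 at tomorrow 09:00
```

The design choice is that the agent can only choose among actions the automation layer already knows how to perform; an unrecognized action is rejected rather than improvised.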

Case Study: The “AI Agent” That Was Actually Automation

A Bengaluru-based upskilling platform came to us after their “AI agent” project had stalled for four months. The team had built something impressive-looking: a LangChain pipeline with GPT-4o that generated personalized study plans.

The problem? It was a chain, not an agent. Every time the input varied from the cases the team had built it around (a student with a non-standard background, a course that launched after the system was built), the whole thing broke in confusing ways. The LLM was being used to format output, not to make decisions.

We rebuilt it as a proper LangGraph agent with three nodes: assess (reads student data, identifies gaps), plan (reasons about learning sequence), and generate (creates the actual plan). We added a fourth node that detected when the plan quality was low and looped back to reassess with different context.

Result: Plan quality (rated by the curriculum team) went from 61% acceptable to 89% acceptable. The rebuild took 3 weeks. The original approach had consumed 4 months of engineering time.
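To make the rebuilt control flow concrete, here is a plain-Python sketch of an assess → plan → generate → quality-check graph with a loop back to assessment. It is not LangGraph code and not the platform's actual implementation: every node is a stub, and the coverage-based quality score, the 0.8 threshold, and the “add prerequisites on retry” behaviour are hypothetical. What it illustrates is the conditional edge: low quality sends the state back to `assess` with wider context, up to a fixed number of attempts.

```python
from dataclasses import dataclass, field


@dataclass
class PlanState:
    student: dict
    gaps: list[str] = field(default_factory=list)
    sequence: list[str] = field(default_factory=list)
    plan: str = ""
    quality: float = 0.0
    attempts: int = 0


def assess(state: PlanState) -> PlanState:
    # Stub for an LLM reading student data. On a retry, widen the context
    # (hypothetically: also include prerequisite topics).
    state.attempts += 1
    state.gaps = list(state.student["weak_topics"])
    if state.attempts > 1:
        state.gaps = state.student.get("prerequisites", []) + state.gaps
    return state


def plan(state: PlanState) -> PlanState:
    # Stub for the LLM reasoning about learning order: keep topics as found.
    state.sequence = list(state.gaps)
    return state


def generate(state: PlanState) -> PlanState:
    state.plan = " -> ".join(state.sequence)
    return state


def check_quality(state: PlanState) -> PlanState:
    # Stub scorer: fraction of weak topics and prerequisites the plan covers.
    needed = set(state.student["weak_topics"]) | set(state.student.get("prerequisites", []))
    state.quality = len(needed & set(state.sequence)) / len(needed) if needed else 1.0
    return state


def run(student: dict, threshold: float = 0.8, max_attempts: int = 3) -> PlanState:
    state = PlanState(student=student)
    while True:
        state = check_quality(generate(plan(assess(state))))
        # Conditional edge: finish if quality is good enough, else loop to assess.
        if state.quality >= threshold or state.attempts >= max_attempts:
            return state


result = run({"weak_topics": ["hypothesis testing"],
              "prerequisites": ["probability", "standard deviation"]})
print(result.attempts, result.plan)
# → 2 probability -> standard deviation -> hypothesis testing
```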

Mistakes Worth Avoiding

- Calling everything an “agent” — creates wrong expectations and wrong architecture. Name your systems accurately: this is a pipeline, this is an agent, this is automation.

- Building agents for tasks that don’t need them — a common pattern in teams excited about LangChain. Agents cost more, fail in harder-to-debug ways, and run slower. Use them only where intelligence is genuinely required.

- Forgetting about observability: agents fail silently in ways automation doesn’t. You need traces, logs, and eval pipelines from day one. LangSmith and Langfuse both offer free tiers for development.
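Even before adopting a dedicated tool, the core idea of tracing (record every step’s inputs, output, errors, and timing) fits in a few lines. A hypothetical Python sketch using only the standard library; it is not the LangSmith or Langfuse API, which add storage and a UI on top of this kind of record.

```python
import functools
import time

TRACE: list[dict] = []  # in-memory stand-in for a tracing backend


def traced(step_name: str):
    """Decorator: record a call's arguments, result or error, and duration."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            record = {"step": step_name, "args": args, "kwargs": kwargs}
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                record["result"] = result
                return result
            except Exception as exc:
                record["error"] = repr(exc)  # failures are logged, then re-raised
                raise
            finally:
                record["seconds"] = time.perf_counter() - start
                TRACE.append(record)
        return wrapper
    return decorator


@traced("classify_intent")
def classify_intent(message: str) -> str:
    # Hypothetical stub for an LLM call that labels the student's message.
    return "concept_question" if "?" in message else "other"


classify_intent("Why is variance squared?")
print(TRACE[-1]["step"], TRACE[-1]["result"])  # → classify_intent concept_question
```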

FAQ

Can I replace Zapier with an AI agent?

Not economically for structured workflows. An n8n automation that handles 10,000 events per day costs a few dollars. The same volume through a GPT-4o agent would cost hundreds of dollars. Use agents where judgment is needed; automation where it’s not.
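The per-run range in the comparison table gives a quick way to sanity-check this. A rough sketch, assuming each of the 10,000 daily events triggers exactly one agent run and counting only the agent’s LLM cost:

```python
EVENTS_PER_DAY = 10_000
# Per-run agent cost range from the comparison table, in rupees.
AGENT_COST_PER_RUN_INR = (0.05, 2.00)

low, high = (EVENTS_PER_DAY * cost for cost in AGENT_COST_PER_RUN_INR)
print(f"Agent cost per day: ₹{low:,.0f} to ₹{high:,.0f}")
print(f"Agent cost per 30 days: ₹{30 * low:,.0f} to ₹{30 * high:,.0f}")
# → Agent cost per day: ₹500 to ₹20,000
# → Agent cost per 30 days: ₹15,000 to ₹600,000
```

Where a given workload lands in that range depends mostly on which LLM is used, as the table notes.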

What framework should I learn first?

LangGraph for production agents (explicit state management, controllable). LangChain for prototyping and learning. Don’t start with AutoGPT — it’s fine for demos but unreliable in production.

How do I know if my use case needs an agent?

Simple test: can you write out every possible step the system needs to take in a flowchart? If yes, automate it. If the answer is “it depends on what the input says,” you need an agent.

Are AI agents reliable enough for production?

With proper guardrails, human-in-the-loop checkpoints, and evals, yes. Without those: no. The key is matching agent autonomy to task criticality.
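Matching autonomy to criticality can start as simply as a lookup table that decides which agent actions may run unattended. A hypothetical Python sketch; the action names and tiers are illustrative, and unknown actions are blocked by default:

```python
# Hypothetical risk tiers: which agent actions may run without a human.
AUTONOMY = {
    "send_practice_problem": "auto",
    "schedule_reminder": "auto",
    "issue_refund": "needs_approval",
    "change_grade": "needs_approval",
}


def gate(action: str, approved_by_human: bool = False) -> str:
    tier = AUTONOMY.get(action, "blocked")  # unknown actions never run
    if tier == "auto":
        return "execute"
    if tier == "needs_approval":
        # Human-in-the-loop checkpoint for high-impact actions.
        return "execute" if approved_by_human else "queue_for_review"
    return "block"


print(gate("schedule_reminder"))  # → execute
print(gate("issue_refund"))       # → queue_for_review
print(gate("drop_all_grades"))    # → block
```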

The Bottom Line

The question isn’t “should I build agents or automation?” — it’s “which of my tasks actually require intelligence?” Start there, and the architecture follows naturally. Most of what you’re automating doesn’t need AI judgment. The parts that do are genuinely better with agents.

Build the right tool for the right job. Your future self debugging a 3am production incident will thank you.

Ready to start your career in data?

Book a free 1-on-1 counselling session with GrowAI. Personalised roadmap, zero pressure.